Agentic-Led Growth: A New Model for How B2B Companies Grow

How we are rebuilding HubSpot's GTM around AI agents, and what every B2B team can learn from it

Everyone is frantically adding AI to their GTM. Very few are running a true agentic-first GTM. It’s the difference between integrating AI and reorienting your GTM around it.

We’ve seen this happen before.

When factories first got access to electricity in the late 1800s, most of them didn’t redesign their operations. They just replaced the steam engine with an electric motor and kept everything else the same: the layout, the workflows, the staffing model. Productivity barely moved. For decades, economists couldn’t find electricity’s impact in the output data, and they called it the productivity paradox.

The factories that eventually transformed weren’t the ones that got electricity first. They were the ones who rebuilt the floor around it. New layouts. New workflows. New roles. Once that happened, productivity compounded.

Most companies right now are replacing the steam engine with an electric motor. A few are rebuilding the floor.

I recently took on a new role at HubSpot, leading Agentic GTM and Systems, the team responsible for building the agent-first go-to-market engine you’re about to read about. It’s the kind of role that didn’t exist six months ago, which tells you something about where this is heading.

What Agentic-Led Growth actually is

Every era of B2B growth has had a primary motion.

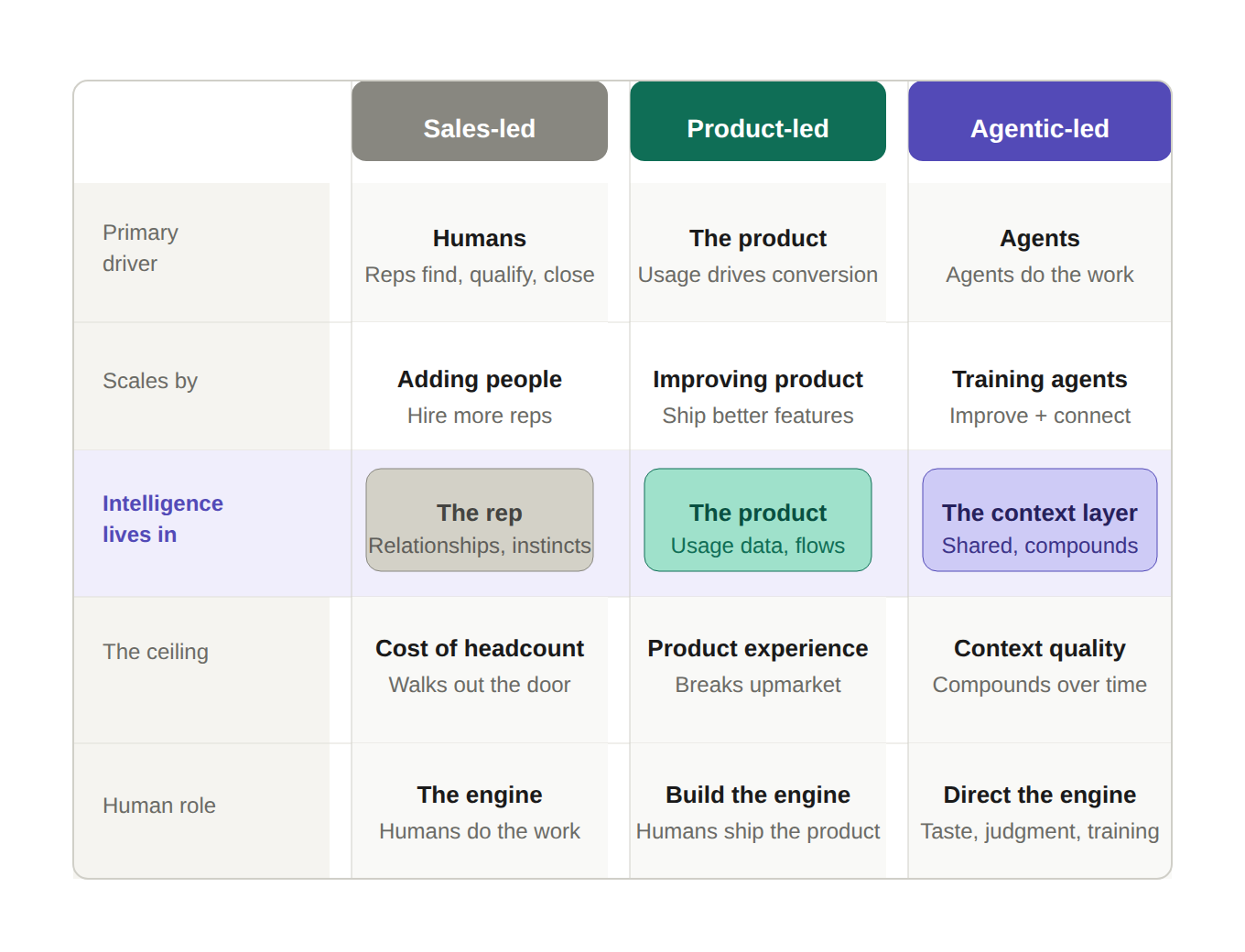

In sales-led growth, humans did the work. Reps found, qualified, and closed. The system scaled by adding people. In product-led growth, the product did the work. Usage, activation, and viral loops drove acquisition and expansion. The system scaled by improving the product.

Both models followed the same underlying logic: identify the most powerful driver of revenue and build your entire GTM motion around it.

There is a second thread running through these models that gets less attention. Each one also represents a different answer to the question of where intelligence lives.

In sales-led growth, intelligence lives in the rep. Relationships, instincts, playbooks, and pattern recognition built over years of selling. It is powerful, and it is deeply human. It is also expensive to hire, hard to scale, and walks out the door when the rep does.

In product-led growth, intelligence moves into the product. Usage data, activation flows, growth loops. It compounds as more users move through the system and scales without adding headcount. But PLG has a natural boundary: it works when the product experience itself is enough to drive the next step. The moment your growth depends on customers who need more than the product can give them (a commercial conversation, a tailored onboarding, a relationship that exists outside the app), the model runs out of road. That is why every PLG company that moves upmarket eventually builds a sales motion on top of it.

In Agentic-Led Growth, intelligence lives in the context layer. Shared across every agent, every stage, every customer interaction. It does not leave when a rep does. It does not plateau when the product reaches its activation limits. It compounds with every interaction the system handles.

That is the lineage. Each model in that sequence is not an improvement on the one before it. It is a different answer to the same question: what is the primary driver of revenue, and how do you build your entire GTM motion around it? Sales-led companies did not become product-led by adding a free trial. They rebuilt the motion. Product-led companies will not become agentic-led by deploying a few agents. The same rebuild is required.

Agentic-Led Growth is a GTM model where AI agents own the repeatable, context-driven work across every stage of the customer lifecycle: acquiring demand, engaging it, monetising it, and delighting customers once they buy. Agents do this autonomously where the task allows, and in collaboration with humans where taste and judgment are required. Humans contribute three things the system fundamentally depends on: taste, to set the standard agents execute to; judgment, to make the calls no amount of data can fully determine; and continuous agent training, to make the system smarter over time. The humans who understand that last part compound their impact in ways that go far beyond personal productivity.

Three modes coexist in a mature Agentic-Led GTM:

Fully autonomous. The task is well-defined, the right answer is derivable from context and data, and human taste and judgment add no meaningful value at the point of execution. The agent owns it entirely. The human’s role was upstream: setting the standards, training the agent, and defining what good looks like. In practice, this is the Inbound Agent we built at HubSpot that qualifies visitors, handles competitive questions, and books meetings with no human in the loop. The AEO Agent ensures HubSpot appears in AI-generated answers before a buyer ever enters the funnel. The Customer Agent is resolving 60% of support interactions without escalation.

Agent-primary, human-closing. The agent owns everything up to the moment where taste or judgment becomes the deciding factor. The human doesn’t do the groundwork. They arrive at the moment that matters (the sales call, the escalation, the strategic conversation) with more context than they’ve ever had, having spent none of their time getting there. In practice, this is the Prospecting Agent we built that identifies intent signals, builds the personalised sequence, times the outreach, and creates the task at precisely the right moment. The rep’s energy goes entirely into the conversation that follows. Neither works as well without the other, but the agent gets the rep to the conversation, and the rep closes it.

Agent-amplified human judgment. The task requires human judgment throughout, but agents continuously surface better context, better signals, and better timing so that judgment is sharper and better informed at every step. The human still owns the outcome. The agent makes them better at delivering it. In practice, this is how we’ve integrated AI into our sales org. AI surfaces the right plays, the right content, the right risk signals, so the rep’s instincts are more accurate.
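One way to read the three modes is as a routing decision made per task. The sketch below is purely illustrative: the `Task` flags and the `choose_mode` function are hypothetical names invented for this example, not a description of how HubSpot's agents are actually wired. It assumes a team can label each task by where human judgment is needed.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    FULLY_AUTONOMOUS = "fully autonomous"
    AGENT_PRIMARY_HUMAN_CLOSING = "agent-primary, human-closing"
    AGENT_AMPLIFIED = "agent-amplified human judgment"


@dataclass
class Task:
    name: str
    # Hypothetical labels: how a team classifies its tasks is an
    # assumption of this sketch, not something the article specifies.
    needs_judgment_at_close: bool    # a human must own the decisive moment
    needs_judgment_throughout: bool  # a human must own every step


def choose_mode(task: Task) -> Mode:
    """Map a task to one of the three modes described above."""
    if task.needs_judgment_throughout:
        # The human owns the outcome; agents only sharpen their context.
        return Mode.AGENT_AMPLIFIED
    if task.needs_judgment_at_close:
        # The agent does the groundwork; the human arrives for the close.
        return Mode.AGENT_PRIMARY_HUMAN_CLOSING
    # No human judgment needed at the point of execution.
    return Mode.FULLY_AUTONOMOUS


tasks = [
    Task("qualify inbound chat", False, False),
    Task("outbound prospecting", True, False),
    Task("run an enterprise deal", True, True),
]
for task in tasks:
    print(f"{task.name}: {choose_mode(task).value}")
```

The order of the checks matters: a task that needs judgment throughout is never handed off as agent-primary, even if it also has a closing moment.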

The consistent thread across all three: agents handle the work that doesn’t require a human so that humans can focus entirely on the work that does. And every time a human exercises taste or judgment within the system (refining an agent-drafted sequence, overriding a recommendation, rewriting a brief), that signal feeds back into the system and makes it better. In a copilot model, a good rep makes themselves more productive. In an Agentic-Led GTM, a good rep makes the entire system smarter.

That is the rebuilt factory floor. And that is why it compounds.

At HubSpot, we’ve been moving our GTM to agentic-led over the past 12 months. A quick breakdown of how this works across the customer journey.

Acquire — finding the right demand before your competitors do

The Demand Agent identifies ICP-match companies, enriches contacts from multiple data sources, and generates a prospect value score predicting both likelihood to close and expected ARR. It added 345,000 accounts to our total addressable market, accounts that reps would otherwise have lacked sufficient data to pursue.
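A prospect value score that reflects both likelihood to close and expected ARR can be sketched as a simple expected-value calculation. This is an assumption for illustration only: the article does not say how the two predictions are combined, and the `Account` fields, function names, and sample figures below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    p_close: float       # predicted likelihood to close, 0.0 to 1.0
    expected_arr: float  # predicted ARR if the deal closes


def prospect_value(account: Account) -> float:
    """Expected value: probability of closing times expected ARR.

    Multiplying the two is an assumption of this sketch; a real
    scoring model could weight or combine them differently.
    """
    if not 0.0 <= account.p_close <= 1.0:
        raise ValueError("p_close must be between 0 and 1")
    return account.p_close * account.expected_arr


def rank_accounts(accounts: list[Account]) -> list[Account]:
    """Highest expected value first."""
    return sorted(accounts, key=prospect_value, reverse=True)


# Made-up accounts, purely to show the ranking behaviour.
accounts = [
    Account("small-but-likely", p_close=0.6, expected_arr=5_000),
    Account("large-but-unlikely", p_close=0.1, expected_arr=50_000),
]
for account in rank_accounts(accounts):
    print(account.name, round(prospect_value(account)))
```

The point of scoring on both dimensions is visible in the sample data: an account that is less likely to close can still rank higher if the revenue at stake is large enough.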

The lesson most teams miss: agents are only as good as the data they run on. Before we got results from the Demand Agent, we invested heavily in data quality, enriching contacts, removing defunct company records, building golden record standards across the CRM. It was unglamorous work that took longer than anyone wanted. It was also the work that made everything else possible. If your CRM is messy, your agents will be confidently wrong at scale. Clean data is not a nice-to-have prerequisite. It is the foundation.

The AEO Agent (Answer Engine Optimisation) makes HubSpot visible and credible in AI-generated responses from tools like ChatGPT and Google AI Mode. Qualified leads from AI-generated answers grew 1,850% between Q1 2025 and Q1 2026. Those leads convert at up to 3x the rate of traditional search.

The lesson: a meaningful and growing percentage of your buyers are now asking AI tools for software recommendations instead of Googling. If you are not visible in those responses, you do not exist for that buyer. It is a channel that is already growing faster than anything else in our acquisition mix.

Engage — converting demand with the right combination of agents and humans

The Inbound Agent qualifies visitors, handles competitive questions, uses propensity scoring to identify buying intent, and books meetings directly with sales reps. It now handles 82% of all inbound chats with zero human involvement.

That number did not start at 82%. It compounded through relentless iteration: model upgrades, prompt optimisation, expanding page coverage. One finding that surprised us: rewriting the agent’s instructions to handle one task at a time delivered a bigger performance jump than upgrading to a more powerful AI model. Better prompts beat better models. That is not what most teams expect when they start optimising. The lesson: measure the slope of improvement, not launch-day performance. An agent that improves two to three points per month for a year becomes something different entirely. The teams that shut agents down after 30 days because the early numbers were modest missed the curve entirely.
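Measuring the slope rather than the launch number is easy to do with a handful of monthly readings. The sketch below fits a least-squares line to a series of monthly automation rates and projects a steady gain forward. The function names and sample figures are hypothetical, chosen only to illustrate the arithmetic; they are not HubSpot's data.

```python
def monthly_slope(rates: list[float]) -> float:
    """Least-squares slope of rate against month index (points per month)."""
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two monthly readings")
    mean_x = (n - 1) / 2
    mean_y = sum(rates) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(rates))
    variance = sum((x - mean_x) ** 2 for x in range(n))
    return covariance / variance


def projected_rate(start: float, points_per_month: float, months: int,
                   ceiling: float = 100.0) -> float:
    """Linear projection, capped because a rate cannot exceed 100%."""
    return min(ceiling, start + points_per_month * months)


# Illustrative readings: percentage of chats handled with no human involved.
readings = [50.0, 52.0, 54.0, 56.0]
print("slope (points/month):", monthly_slope(readings))
print("after 12 months at 2.5 points/month:", projected_rate(50.0, 2.5, 12))
```

A modest launch number with a steady positive slope tells a very different story from the same number with a flat line, which is why a 30-day snapshot is a poor basis for shutting an agent down.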

The Prospecting Agent orchestrates outreach across all channels, tracking intent signals, generating personalised multi-touch sequences, creating tasks for reps at precisely the right moment. It books over 10,000 meetings per quarter.

The lesson here was humbling. The original assumption was that AI-personalised email sequences would do most of the work. They didn’t. Only a small percentage of meetings get booked through email alone. What actually worked was the combination: automated outreach coupled with a human sales call drove 20% higher meeting conversion than either channel alone. The agent handles timing, personalisation, and the groundwork. The rep handles the conversation. Neither works as well without the other. Agents are best at removing latency from the process. Humans are best at what follows.

Monetise — closing and expanding revenue with better-informed humans

In deals where AI was used, the win rate is 13% higher.

This is the clearest example of agent-amplified human judgment in our stack. AI does not run the deal. It surfaces the right plays, the right content, the right risk signals at the right moment, so the rep’s instincts are sharper and better timed. The rep still owns the relationship. The rep still makes the call. The agent makes them better at both.

The lesson: in the monetisation stage, the agent’s job is not to replace human judgment. It is to make human judgment more accurate. A rep using AI agents is not faster at doing the same job. They are better at doing a harder one. That distinction matters when you are designing what your agents do and what your humans do at this stage.

The invisible layer that makes it work

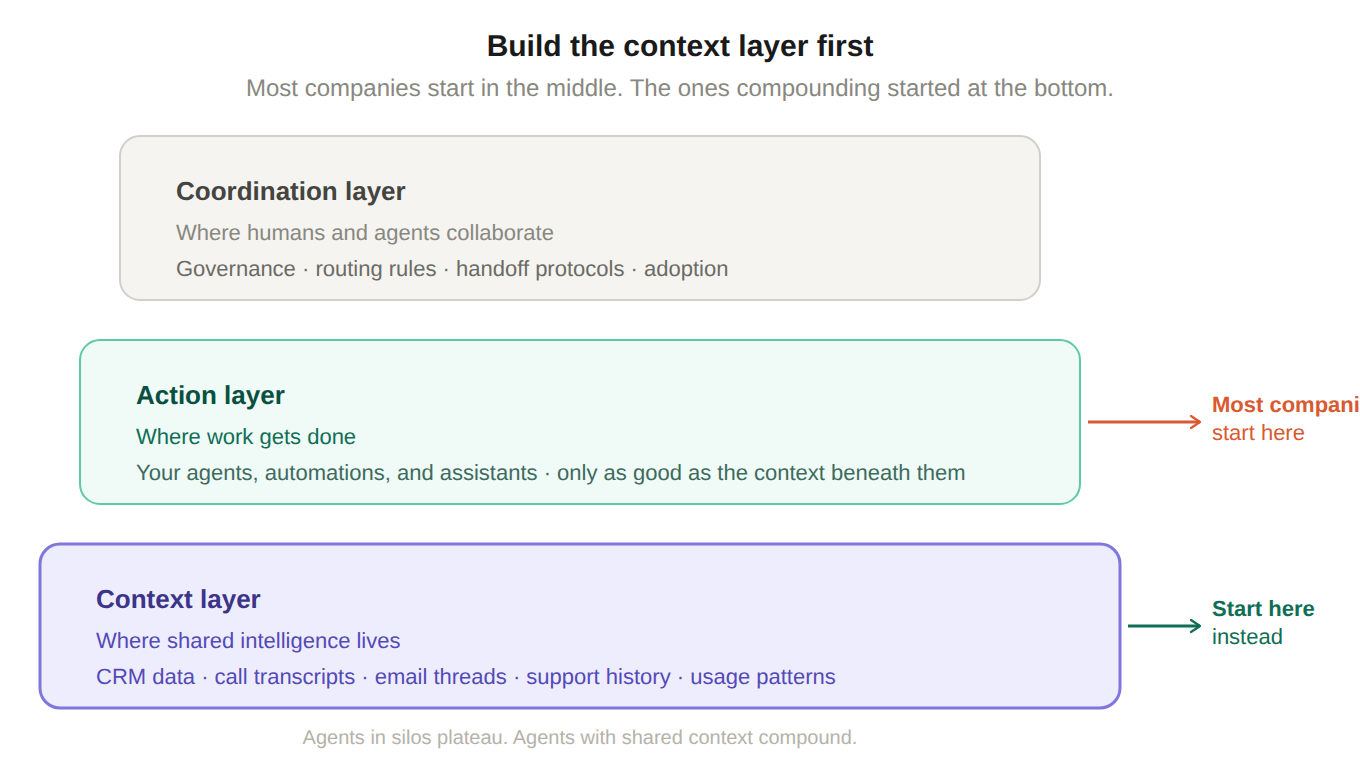

There’s more to agentic-led growth than the agents. You have to think about your AI in three layers.

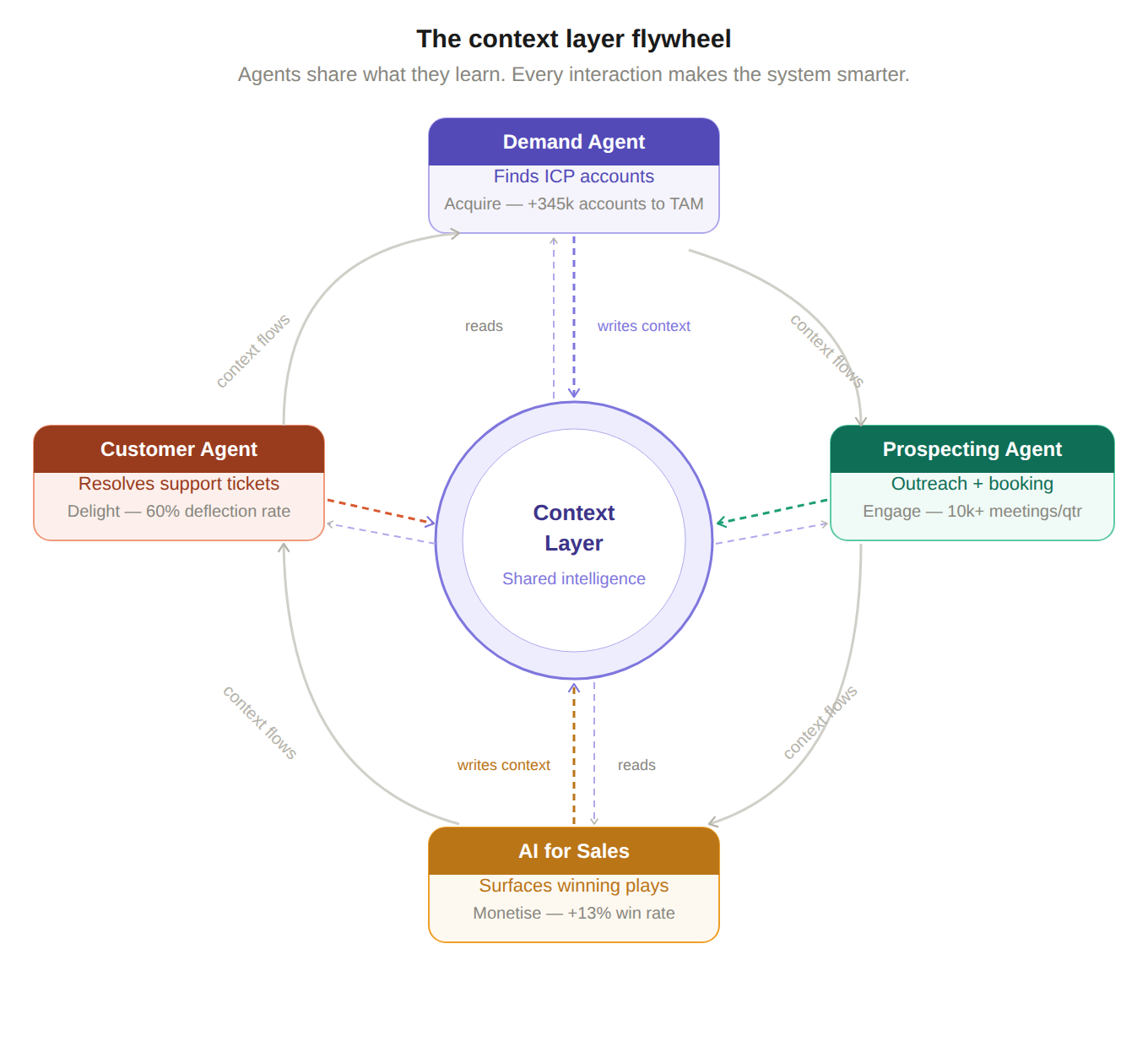

Context Layer. Where customer understanding lives. Not just structured CRM data, but unstructured data: call transcripts, email threads, support conversations, usage patterns, sales stage history. This is the foundation. When the Demand Agent identifies an account, that context flows to the Prospecting Agent. When the Prospecting Agent generates outreach, deal context flows to our sales agent. When the support agent resolves a ticket, that context is available to customer success. Context needs to be shared across agents.
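The core idea of a shared context layer can be sketched in a few lines: every agent writes to, and reads from, the same per-account history. This is a toy in-memory illustration under stated assumptions. `ContextLayer`, `ContextEvent`, and the event kinds are hypothetical names, and a real system would sit on top of a CRM or data platform rather than a Python dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ContextEvent:
    source: str  # which agent or system wrote the event
    kind: str    # hypothetical labels, e.g. "icp_match", "outreach_sent"
    detail: str


class ContextLayer:
    """A single per-account history that every agent shares."""

    def __init__(self) -> None:
        self._events: dict[str, list[ContextEvent]] = defaultdict(list)

    def record(self, account_id: str, event: ContextEvent) -> None:
        self._events[account_id].append(event)

    def history(self, account_id: str) -> list[ContextEvent]:
        # Return a copy so callers cannot mutate the shared record.
        return list(self._events.get(account_id, []))


context = ContextLayer()

# One agent writes what it learned about an account...
context.record("acct-1", ContextEvent("demand_agent", "icp_match",
                                      "matches ICP, enriched from two sources"))

# ...and the next agent starts from that history instead of from scratch.
context.record("acct-1", ContextEvent("prospecting_agent", "outreach_sent",
                                      "first touch sent"))
for event in context.history("acct-1"):
    print(f"{event.source}: {event.kind} ({event.detail})")
```

The design choice that matters is that no agent keeps its own private store. An agent in a silo would see an empty history for `acct-1`; an agent on the shared layer sees everything that came before it.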

Action Layer. Where work gets done. Your agents, automations, and assistants. These are only as good as the context they have access to. An agent with a rich context layer is operating with years of account history on every interaction. An agent without it is starting from scratch every time.

Coordination Layer. Where humans and agents collaborate. Governance. Routing rules. Handoff protocols. Feedback loops. This is where adoption either happens or doesn’t. We learned this the hard way: naming matters, workflow integration matters, and rep understanding of what the agent is doing and why matters. We use tools like Braintrust to evaluate and improve agent outputs continuously, which is part of what made adoption stick. The best-designed agent fails if the team does not trust it or cannot see what it is doing.

Most companies start with the Action Layer. Build an agent. Deploy it. Measure it. That is the rational starting point, and there is nothing wrong with it.

The companies sustaining results built the Context Layer first, or in parallel. This is the rebuilt factory floor. Not the agents themselves, the infrastructure the agents run on. Agents in silos plateau. Agents with shared context compound.

The objection I’d have if I were reading this

“This is HubSpot-scale infrastructure. We don’t have those resources.”

Fair. Let me be direct.

You are not going to build this stack in a quarter. But the sequencing is learnable, and the principles hold regardless of scale.

Start with support. The feedback loops are tightest, the ROI is clearest, and organisational confidence in AI compounds from there. A support agent resolving 60% of tickets without human involvement is a legible win that earns you the credibility to go further.

Invest in data quality before you invest in agents. Every team seeing the highest returns from agents made this investment first.

Measure the slope, not the launch. Every agent in an effective system started with modest results. The teams that shut them down after 30 days missed the curve. The teams that kept improving them quarter over quarter are the ones now running at 82% automation on inbound and booking over 10,000 meetings per quarter via their Prospecting Agent.

Orchestrate across channels, not within one. Email alone does not work. Calls alone do not work. The combination, agents handling timing and personalisation, humans handling the conversation, is what works.

Build the Context Layer first. Or at least in parallel. The compounding comes from shared intelligence. Without it, you are running features, not a system.

Treat adoption as a product problem. The best-designed agent fails if the team does not trust it or cannot see what it is doing. Getting humans to act on agent outputs is as important as building the agents themselves.

The pattern recognition question

In 2013, Blake Bartlett at OpenView Partners was watching a handful of SaaS companies behave strangely. Datadog. Expensify. A few others. They were growing fast, with almost no outbound sales motion. By every rule of the VC playbook, they should have been struggling.

What Bartlett eventually understood was that the product itself was doing the selling. Not passively, but structurally. These companies had removed so much friction from time-to-value that users became the primary acquisition channel. The sales funnel had not disappeared. It had inverted.

He did not formally name the pattern “Product-Led Growth” until 2016. By then, Slack had a $2.8B valuation with almost no traditional sales organisation. Dropbox had proven that consumer-grade UX could drive B2B adoption at scale.

The term gave coherent language to something that had been happening for three years without a name. And once it had a name, every serious GTM leader had to have a position on it.

The pattern for Agentic-Led Growth is the same. The companies building it are seeing results that are now documented and public. The naming moment is happening now.

The question is the same one it was with PLG: are you in the early-adoption cohort that builds the infrastructure before the playbook is obvious, or the second-wave cohort that adopts once it’s proven?

Neither answer is wrong. The second-wave cohort with PLG still won. But the companies who understood what Bartlett was pointing at in 2013 had a three-year head start on the compounding.

The factories that replaced the steam engine with an electric motor eventually caught up too. But the ones that rebuilt the floor first? They compounded while everyone else was still figuring out the wiring.

Think about where your GTM engine is today. Which of those three layers do you actually have? Where does your context live? What agents are you running, and what are they connected to?

And just start. We’re still early. Every iteration and rep you earn from moving towards a true agentic GTM is invaluable data on what works and what doesn’t.

Until Next Time,

Happy AI’fying

Kieran